Today we’re pushing live a complete rewrite of the Khan Academy Exercise framework (live demo).

A big push at Khan Academy has been to write more and more exercises for students to practice with. Naturally, to increase the number of exercises we have, we needed to make it easier for team members, and casual committers, to write them.

When I was first looking into Khan Academy I did a bunch of digging through their code base (which is open source) and noticed that a lot of effort was going into writing exercises. Interestingly, all the exercises were written in pure JavaScript and depended upon the server component in order to run properly. To me this seemed like a massive barrier to entry.

Brand New Framework

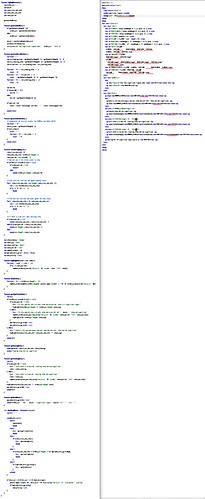

When I started, just over two months ago, I immediately set about rewriting the framework to use semantic HTML and to not rely upon any server-side components whatsoever. To illustrate the change, here is one particular exercise, Significant Figures 1, written for the old framework and for the new framework. You can view the live version of the exercise as well.

Significant Figures 1 went from 191 lines of code down to 58.

Note the dramatic lack of “code” – most logic is encapsulated and reduced to a series of variable declarations (some with minimal logic). Additionally, utility functions were made significantly more useful and generic, and are now shared across exercises (some of this was done in the old framework, but a complete rewrite allowed us to find areas of obvious overlap).
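To give a feel for what "reduced to a series of variable declarations" can look like, here is a minimal JavaScript sketch. A helper named `randRange` does exist in the framework (readers have spotted it in search results), but the body here is an assumption rather than the framework's code, and `shuffle`, `CORRECT`, and `CHOICES` are hypothetical names chosen for illustration.

```javascript
// Hypothetical re-implementations of two shared exercise utilities.
// The framework has a randRange helper, but these bodies are assumptions.

// Random integer in [min, max], inclusive on both ends.
function randRange(min, max) {
  return Math.floor(Math.random() * (max - min + 1)) + min;
}

// Fisher-Yates shuffle on a copy, leaving the caller's array untouched.
function shuffle(items) {
  const a = items.slice();
  for (let i = a.length - 1; i > 0; i--) {
    const j = Math.floor(Math.random() * (i + 1));
    [a[i], a[j]] = [a[j], a[i]];
  }
  return a;
}

// With helpers like these, an exercise's logic shrinks to a few declarations:
// pick a correct answer, then build shuffled multiple-choice options around it.
const CORRECT = randRange(2, 5);
const CHOICES = shuffle([CORRECT, CORRECT - 1, CORRECT + 1, CORRECT * 2]);
```

Because the randomness and bookkeeping live in shared helpers, each exercise only has to state what it generates, not how.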

The result of the new framework is an interesting mix of semantic markup and logic – wrapped in a form of highly specialized templating. Tons of details regarding the specifics of the framework can be found in the Writing Exercises tutorial.

In short, some of the cool new features of this framework include:

- A full module system for dynamically loading specific utilities (such as graphing).

- A brand new templating framework, including template inheritance, that makes it easy to reduce repeated code in exercises (some examples).

- A brand new graphing framework (written by Ben Alpert, named ‘Graphie’) built on top of Raphael. This framework provides a simple API for creating all sorts of graphs and charts; it’s used extensively throughout the exercises.

- An extensible plugin system for creating new answer types (to expand beyond basic multiple choice or fill in the blank).
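An extensible answer-type system usually comes down to a registry that maps a type name to a validator. The sketch below assumes that design; `registerAnswerType`, `checkAnswer`, and the `{ validate }` plugin shape are hypothetical names, not the framework's actual API.

```javascript
// Hypothetical answer-type plugin registry. The names registerAnswerType,
// checkAnswer, and the plugin shape are illustrative, not the real API.
const answerTypes = new Map();

function registerAnswerType(name, plugin) {
  if (typeof plugin.validate !== "function") {
    throw new TypeError(`answer type "${name}" must provide validate()`);
  }
  answerTypes.set(name, plugin);
}

function checkAnswer(type, correct, guess) {
  const plugin = answerTypes.get(type);
  if (!plugin) throw new Error(`unknown answer type "${type}"`);
  return plugin.validate(correct, guess);
}

// Fill in the blank: compare trimmed text.
registerAnswerType("text", {
  validate: (correct, guess) => String(guess).trim() === String(correct),
});

// Multiple choice: the guess is the selected option.
registerAnswerType("radio", {
  validate: (correct, guess) => guess === correct,
});

// A new type plugged in without touching checkAnswer: numbers within a tolerance.
registerAnswerType("decimal", {
  validate: (correct, guess) => {
    const s = String(guess).trim();
    const n = Number(s);
    return s !== "" && Number.isFinite(n) && Math.abs(n - correct) <= 1e-9;
  },
});
```

The point of the indirection is that `checkAnswer` never changes: supporting a new kind of answer means registering one more plugin.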

Of course, that says nothing of the actual user interaction. Most of the new framework’s logic lives on the client side and is driven directly off of Khan Academy’s REST API.

By moving logic to the client side we’re able to provide a much more interactive experience (doing all problem generation on the client, submitting answers in the background via Ajax, things of that sort).

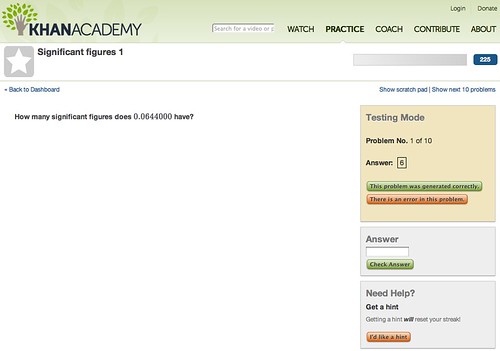

Testing

A big concern for us with this rewrite was ensuring that the quality and integrity of the exercises would be maintained well into the future. We wanted to make sure that the content of the exercises was correct and that regressions would not creep in over time.

In fact, we have three levels of testing:

- We have unit tests for our modules and utility functions (ensuring that things like the templating continue to work correctly).

- We have an interactive interface for generating unit tests for any given exercise (details below).

- We have a “Report a Problem” interface for sending post-deployment issues directly to our bug tracker.

The second level is particularly interesting. Fundamentally, it’s hard to unit test something as complex as an exercise. While we can ensure that exercises still work at a functional level, we can’t verify whether the problems make sense, whether graphs look correct, or whether it’s even possible to pick the right answer.

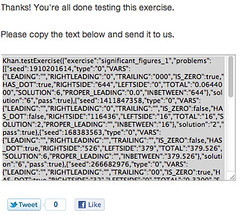

For these reasons we’ve introduced a new testing interface in which human developers act as unit testers. In test mode an additional panel asks the user whether the problem is working correctly. We ask testers to really dig into the exercises and make sure that they work correctly, that hints are displayed properly, and other things of that nature.

All of this information is collected and distilled into a piece of JavaScript code that is dropped into our test suite and run under QUnit. At the moment we have around 2414 unit test collections with over 10,000 assertions.
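As a rough illustration of distilling a tester's report into JavaScript for the suite, the sketch below turns one report into QUnit test source. It assumes problems can be regenerated deterministically from a seed; the report fields (`exercise`, `seed`, `answer`, `hintsOk`, `hintsNote`) and the `generateProblem` helper named in the generated code are hypothetical. The generated code targets the current `QUnit.test(name, function (assert) { ... })` API rather than the 2011-era globals.

```javascript
// Hypothetical sketch: distill one tester's report into QUnit test source.
// The report shape and the generateProblem() helper named in the generated
// code are assumptions for illustration, not the framework's real format.
function reportToQUnitSource(report) {
  const title = `${report.exercise} (seed ${report.seed})`;
  return [
    `QUnit.test(${JSON.stringify(title)}, function (assert) {`,
    `  var problem = generateProblem(${JSON.stringify(report.exercise)}, ${report.seed});`,
    // Pin the answer the human verified, so a later change that alters it fails.
    `  assert.strictEqual(problem.answer, ${JSON.stringify(report.answer)}, "answer matches tester");`,
    // Record the tester's verdict on the hints as an explicit check.
    `  assert.ok(${Boolean(report.hintsOk)}, ${JSON.stringify(report.hintsNote || "hints reviewed")});`,
    "});",
  ].join("\n");
}

const source = reportToQUnitSource({
  exercise: "significant_figures_1",
  seed: 42,
  answer: "3",
  hintsOk: true,
});
```

The resulting `source` string is what would be appended to a test file; a report that flagged broken hints produces an `assert.ok(false, ...)`, so the problem stays visible in every run until it is fixed.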

We’re currently working on better reporting for all those unit tests, and on better integration with our development process.

Development Process

I’m rather pleased with how the overall development process worked out. We actively kept both the code open (it’s up on GitHub and MIT licensed) and the process open as well. We’ve had contributions from more than twice as many outside contributors as members of the Khan Academy team (although the vast majority of the development was done by members of the team).

We have a detailed Getting Involved guide that explains how exactly anyone can participate in the development of the framework (or in the authoring of new exercises).

Predominantly we’ve been coordinating through a public HipChat room, using the GitHub issue tracker, and managing all the exercise work through a series of Google Spreadsheets.

We’re now transitioning into creating a ton more exercises, and we’d love to have even more help from the community. There’s plenty of information on how to get involved in the wiki.

Next Steps and Thanks

There are a few things that we’re looking to do in the near future: bringing offline support to the exercises (for those with spotty internet connections or those using the upcoming iPad/Android application), client-side translation of content into other languages, and checking exercise answers using Node.js and JSDOM.

I also want to take this opportunity to thank everyone at Khan Academy, and all the community contributors, for helping to make this project happen. I especially want to thank Marcia Lee, Ben Alpert, Jeff Ruberg, Igor Terzic, Omar Rizwan, and Ben Kamens for their help in getting this out the door.

I should also mention that Khan Academy is hiring devs. We’re looking for both server/client devs (Python/JavaScript) and also mobile devs (iOS and Android).

Doug Holton (July 28, 2011 at 3:50 pm)

No offense, you guys are doing amazing work, but to create a simple exercise, someone needs to be very fluent in HTML, CSS, JavaScript, jQuery, Git, and also know some Python and how to use the command line.

That means teachers for the most part aren’t going to be able to contribute exercises. Even as a developer it seems like there has to be an easier way. (There actually are easier ways on other platforms, but the closest I’ve seen for HTML5 so far is http://worrydream.com/Tangle/ )

Also, FYI, SVG (used by Raphael) isn’t supported in Android versions before 3.0 (i.e. on every Android phone currently out there). And – I’ve tried to bring this up before – but are any of the exercises accessible? (as required by law in most educational contexts)

John Resig (July 28, 2011 at 4:17 pm)

@Doug: So yes, you do need to know HTML – and probably some logic as well – and really only so much Git as to follow the step-by-step instructions for contributing (which we outline on the site, none of which requires command line access or Python or CSS knowledge or even, necessarily, JavaScript and jQuery knowledge). Granted – you would need to know all of that if you wanted to contribute to the framework itself, but that’s something else entirely.

We’ve had a number of basic contributors providing exercises who know nothing more than the math that they’re building the exercise about. Using our guides they can work their way through the HTML and the basic Git workflow to submit their material. Considering that non-programmers, whose only knowledge is the material itself, are contributing, I think it speaks very well to the prospect of teachers contributing their knowledge.

As far as SVG support goes, we’re OK with that cutoff at this point. We’re working on virtually all desktop platforms and on iOS devices (our primary mobile target at the moment), and I’m sure that by the time we get closer to shipping an Android app, 3.0 distribution will be farther along. If not, we can always switch Graphie’s underlying tech to Canvas, giving us that additional platform support. That’s a couple days of extra work, tops; not terribly worrisome.

The point about accessibility is a great one. Nothing that we’re doing in the layout of the page is inherently making it inaccessible, although there is certainly work we can do to make it especially accessible. It’ll likely depend upon what level of accessibility we’re talking about, but if we’re talking about people who are hard of sight then we’re perfectly OK right now. I’m not sure how well we handle people with different kinds of color-blindness; we would need to check into that. As far as blind users go, we use MathJax, which has built-in screen reader support:

http://www.mathjax.org/resources/articles-and-presentations/accessible-pages-with-mathjax/

The rough areas right now are definitely those with charts/graphs (it’s not clear to me how those are presented to blind users outside the context of a computer, let alone inside a browser, although I’d love to learn more).

I definitely want to explore adding ARIA support to the framework, making it much easier for users to handle the interactions that we provide and giving screen readers even greater insight into our application. I hope to look into that soon.

Shantanu Kumar (July 28, 2011 at 5:24 pm)

Hello, are you working toward eventually supporting QTI for running the exercises?

Brad Goodman (July 28, 2011 at 5:28 pm)

jQuery is awesome, and this looks it too

Remember, you said yourself in an old interview that jQuery ‘won’ because its documentation was better than its competitors’

The clearer the documentation the faster people will uptake

Thanks & Keep Rockin’

Chad (July 28, 2011 at 5:38 pm)

Why not support writing exercises in a wiki style markup?

Scott (July 29, 2011 at 3:15 am)

I feel like the hidden project you’re in-progress on (but not quite done with yet) is a drag-and-drop/modular exercise creator tool. The second you ask that people know *any* amount of programming to contribute, you turn away contributors who aren’t interested in the time investment required to make a single question. A drag and drop creator would eliminate all those issues, and with a new framework all built and ready to be used (and less code needed for the same exercise), there’s got to be a tool in the works.

As a sidenote, thanks for doing what you do best – I can’t tell you how many students I’ve referred to Khan Academy!

Vincent Voyer (July 29, 2011 at 5:53 am)

Hello, very interesting.

Could you elaborate on “exercise answer checking using Node.js and JSDOM”?

I’ve worked with Node.js and JSDOM. JSDOM is great but VERY slow; since answer checking must be fast, I’m wondering what you really want to do here?

Steve (July 29, 2011 at 9:41 am)

Hi,

It’s really interesting what you and Khan Academy are doing. I wanted to have a look at the code on Kiln you linked to, but there seems to be an error when I try to clone the repo using hg:

“abort: repository [git]http://github.com/Khan/khan-exercises.git not found!”

I think there might be a typo that should be https://?

Thanks for all the work, it’s a brilliant project that’s getting better all the time!

Christian Oestreich (July 29, 2011 at 10:56 am)

Looks good so far. I end up doing a lot of the same type of thing at my job here as well. To build on what Doug was saying, have you considered creating an [optional/default] exercise builder, perhaps to abstract away the need to completely understand all the code components? Seems like a pretty easy thing to build and have available for those who would need it.

Sean (July 29, 2011 at 11:14 am)

Mr. Resig,

This is all very interesting, but I have a much more important question that needs to be answered.

With all the upgrades you are implementing, will you ever have to reset our energy points?

Thanks!

John Resig (July 29, 2011 at 11:26 am)

@Shantanu: We’re not really looking at QTI support at this time.

@Chad: The major advantage of having it in HTML is that you don’t need any sort of “compile” step before running an exercise – you just open up your browser and you’ll see your live example right there. That’s very compelling.

@Scott, Christian: Having a visual exercise builder is definitely something that we’re exploring. It’s doubtful that it would be able to produce exercises at the level of complexity that you see here (it might only handle basic question/answer style problems), but it would make it far easier for people to contribute.

@Vincent: Thankfully the validation step doesn’t need to be that fast – we can do it asynchronously and validate the responses when we can.

@Sean: Energy points will not be reset, nor will your streaks. This is just a rewrite of the exercise internals.

Daniel Hendrycks (July 29, 2011 at 11:59 am)

” https://spreadsheets0.google.com/spreadsheet/ccc?authkey=CJWi-LMM&key=0AsgWawUKHSJldGlvX3RUX2FyMEpMdzdRRWlOLXg3TVE&hl=en_US&authkey=CJWi-LMM#gid=8 ”

Could you add more wanted exercises? Surely those aren’t the only ones. I ask because I’d like to try my hand at doing one for Physics.

Frank Noschese (July 29, 2011 at 1:00 pm)

Have you looked at WebAssign? They make it fairly easy for teachers to code their own randomized problems. They go way beyond multiple choice and fill-in-the-blank questions, too. For example, some problems require students to make a graph (say, a system of linear inequalities with student-defined shading) and it can check it on the spot.

John Resig (July 29, 2011 at 3:50 pm)

@Daniel: I think we’re quite open to accepting exercises that aren’t on the list. Pop on over to the HipChat channel and see if it sounds like a good idea!

@Frank: I just looked into WebAssign, per your suggestion. I tried out their sample web interface but I’m not 100% sure how best to use it. Do you have any more details/guides on how to interact with it and create exercises? We definitely want to expand into providing a web interface for generating problems (building off of the technology that we’ve developed here) and I’m definitely curious to see what we can learn from, and how we can improve upon, other technologies.

Daniel Hendrycks (July 29, 2011 at 5:10 pm)

“I think we’re quite open to accepting exercises that aren’t on the list. Pop on over to the HipChat channel and see if it sounds like a good idea!”

Sure thing, thanks for the response.

Chuck Houpt (July 30, 2011 at 1:29 pm)

The client-side, declarative design is great, but why define the exercise formulas as HTML text? It isn’t really semantic HTML, right? For example, a var element is meant to be a single visible variable, not a whole formula which is invisibly interpreted.

This can cause unintentional problems, like “randRange” showing up in search results:

http://www.bing.com/search?q=site%3Awww.khanacademy.org+randRange

Wouldn’t it be just as easy to define the formulas in a JSON-style declaration in a semantically meaningful script element? The code would be something like:

exercise = { vars: {"IS_ZERO": "random() > 0.5", ...}, problems: ..., hints: ...};
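A runnable take on the JSON-style declaration suggested above might look like the following. It is a sketch under assumptions: the vars are functions rather than the string expressions in the comment (so nothing has to be passed to `eval`), and the field names, the `generate` function, and the `{NAME}` placeholder syntax are hypothetical.

```javascript
// Hypothetical sketch of the JSON-style declaration suggested above, using
// functions instead of strings so nothing needs to be eval'd. The field names
// and the {NAME} placeholder syntax are assumptions for illustration.
const exercise = {
  vars: {
    IS_ZERO: () => Math.random() > 0.5,
    A: (v) => (v.IS_ZERO ? 0 : 1 + Math.floor(Math.random() * 9)),
  },
  problem: "Is {A} equal to zero?",
  answer: (v) => (v.IS_ZERO ? "yes" : "no"),
};

function generate(ex) {
  const v = {};
  // Non-integer string keys keep insertion order, so each var can read the
  // ones declared before it (A reads IS_ZERO here).
  for (const [name, fn] of Object.entries(ex.vars)) {
    v[name] = fn(v);
  }
  // Substitute {NAME} placeholders with the generated values.
  const text = ex.problem.replace(/\{(\w+)\}/g, (_, name) => String(v[name]));
  return { vars: v, text, answer: ex.answer(v) };
}
```

One trade-off worth noting: keys that look like integers (for example `"1"`) are enumerated before other keys, so ordered declarations should use non-numeric names.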

Björn Ali Göransson (July 31, 2011 at 8:49 am)

For visually impaired users, graphs need to be read out as tables (since that’s what they are, semantically).
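One way to act on that suggestion is to emit the data behind each graph as an ordinary HTML table alongside the drawing. A minimal sketch, assuming the graph's data is available as a list of `[x, y]` points; `pointsToTable` and `escapeHtml` are hypothetical helpers, not part of Graphie.

```javascript
// Hypothetical sketch: expose a graph's underlying data as an HTML table that
// a screen reader can walk cell by cell. Not part of Graphie; names are made up.
function escapeHtml(value) {
  const map = { "&": "&amp;", "<": "&lt;", ">": "&gt;", '"': "&quot;" };
  return String(value).replace(/[&<>"]/g, (c) => map[c]);
}

function pointsToTable(caption, xLabel, yLabel, points) {
  const rows = points
    .map(([x, y]) => `<tr><td>${escapeHtml(x)}</td><td>${escapeHtml(y)}</td></tr>`)
    .join("");
  return (
    `<table><caption>${escapeHtml(caption)}</caption>` +
    `<thead><tr><th scope="col">${escapeHtml(xLabel)}</th>` +
    `<th scope="col">${escapeHtml(yLabel)}</th></tr></thead>` +
    `<tbody>${rows}</tbody></table>`
  );
}

// The line y = 2x, sampled at a few x values.
const html = pointsToTable("y = 2x", "x", "y", [[0, 0], [1, 2], [2, 4]]);
```

The table could be visually hidden while staying available to assistive technology; the `<caption>` and `scope="col"` headers give a screen reader the context for each cell.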

Paul Harper (August 1, 2011 at 10:02 am)

@John Resig: On the point of color-blindness, I’m pretty glad you bring it up (I am color blind). It’s normally not considered an accessibility issue, but I know from experience that it can be pretty frustrating to figure out what a graph means when you can’t differentiate between the colors.

It seems like with web technology, it would be relatively easy to adjust visuals to use different colors based on the user currently being served. Say I could check a box in my profile saying I’m red-green color blind (there are other types, but this is by far the most common). Then the graphs could automatically adjust to never use red and green (or a mixture of the two) in the same graph. It would even be pretty easy to give a simple color-blindness test over the internet (this would make a great video, in fact).
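The per-user palette idea can be sketched in a few lines. This assumes a hypothetical `redGreenColorBlind` flag on the user's profile; the safe colors are taken from the Okabe-Ito palette, which is commonly recommended for color-vision deficiency, while the default palette and the function names are made up for the example.

```javascript
// Hypothetical sketch: choose graph colors from a per-user profile setting.
// Default palette (invented for this example) pairs red and green,
// which is exactly the problem case.
const DEFAULT_PALETTE = ["#FF0000", "#00AA00", "#0000FF", "#FFA500"];

// Colors from the Okabe-Ito palette, chosen to stay distinguishable
// under common forms of color-vision deficiency.
const CVD_SAFE_PALETTE = ["#0072B2", "#E69F00", "#56B4E9", "#D55E00"];

function paletteFor(user) {
  return user && user.redGreenColorBlind ? CVD_SAFE_PALETTE : DEFAULT_PALETTE;
}

// One color per data series, cycling if there are more series than colors.
function seriesColors(user, count) {
  const palette = paletteFor(user);
  return Array.from({ length: count }, (_, i) => palette[i % palette.length]);
}
```

Since graphing code would ask `seriesColors` for its colors instead of hard-coding them, a single profile checkbox is enough to change every graph a user sees.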

John Maguire (August 1, 2011 at 12:48 pm)

As a writing teacher, I have taught key concepts about readable writing to freshmen using cardboard panels with sliders in them. I can see how such a slider metaphor could be adapted for Internet use.

For instance, to teach the importance of sentence length, one could present a paragraph written in very long sentences, and allow students to alter the passage to a shorter average sentence length by moving a notched slider. Each different notch would give a version of the passage differently written–and in this case, written with different sentence-length averages. The learning experience comes from reading the mostly identical passages, noting the different degrees of clarity, and noticing what the sentence-length score for that passage is.
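The score behind such a slider is simple to compute. A minimal sketch, assuming sentences end in `.`, `!`, or `?` and words are separated by whitespace (abbreviations such as "Dr." would be miscounted); `averageSentenceLength` and `sliderView` are hypothetical names, and each notch is assumed to map to one pre-written version of the passage.

```javascript
// Hypothetical sketch: average words per sentence, using naive splitting on
// terminal punctuation (abbreviations like "Dr." will be miscounted).
function averageSentenceLength(text) {
  const sentences = text
    .split(/[.!?]+/)
    .map((s) => s.trim())
    .filter((s) => s.length > 0);
  if (sentences.length === 0) return 0;
  const words = sentences.reduce((n, s) => n + s.split(/\s+/).length, 0);
  return words / sentences.length;
}

// Each slider notch selects one pre-written version of the passage;
// out-of-range notches are clamped to the ends of the slider.
function sliderView(versions, notch) {
  const i = Math.max(0, Math.min(versions.length - 1, notch));
  const text = versions[i];
  return { text, score: averageSentenceLength(text) };
}
```

The passages themselves would still be written by the teacher; the code only picks the version for the current notch and reports its score.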

But I know nothing about writing the code to produce something like that.

Brock Boland (August 1, 2011 at 9:41 pm)

I went to try out the exercise, thought I found a bug, and instead watched the video and learned that I had been mistaken about significant figures for a long time. I guess that means I need to spend more time with the site!

Frank Noschese (August 2, 2011 at 7:33 pm)

Here’s the WebAssign support page:

http://webassign.net/info/support.html

Linked there is the Question Coding Guide:

http://www.webassign.net/manual/Question_Coding_Reference.pdf

I don’t know how WA takes the specialized HTML-like tags and turns them into proper code, but at least you can get a sense of what is possible. Lots of different question types that are fairly easy to code.

toadold (August 7, 2011 at 4:35 am)

At the time I am writing this it is August 7, 2011. I’ve got the attention span of a gnat and I’m worried that I’m going to lose the ground that I gained. Can you give us any idea when the exercises will be available again?

Tom (August 10, 2011 at 12:17 am)

Perhaps Sal will make a video set on how to contribute exercises? In all seriousness, it may be a more accessible option for some and could get the word out faster.